There exists a pervasive myth in the tech industry: that usability and security are mutually exclusive. The notion is that making something secure inherently makes it hard to use, and making something easy to use inherently makes it less secure.

In fact, the opposite is true. Good user experience design and good security cannot exist without each other. A secure system must be controllable, reliable, and hence usable. A usable system reduces confusion and limits unexpected behavior, leading to better security outcomes.

So why does it feel like never the twain shall meet? What holds us back from building security systems that are simple, straightforward, and easy to use?

Culture first

Everyone deserves to be secure, whether or not they’re experts in tech or security. This is a perfectly noncontroversial ideal. But if everyone deserves to be secure without being experts, then we need to stop expecting everyone to become security experts just to participate online.

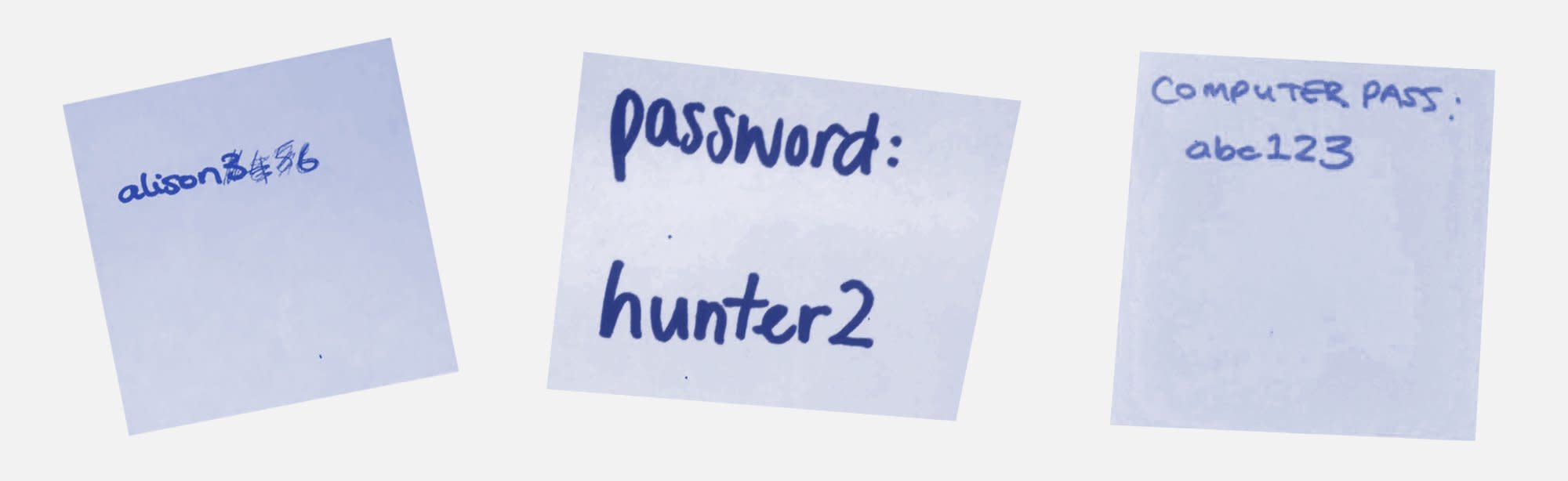

In tech, and especially in security, our entrenchment in the everyday nitty-gritties of technology can disconnect us from the lives, priorities, and behaviors of others who don’t have the technical prowess that we might. We’ll shame them for reusing the same password for all of their accounts. We’ll laugh at people posting photos of their credit cards online (and we’ll repost them, making their situation worse). We’ll tell non-technical people to use extremely technical tools (:cough: PGP :cough:), and then belittle them when they can’t figure it out.

While it’s understandable to be frustrated by the abundance of bad security practices, this culture of shame is unhelpful. And because we work in tech, it’s lazy. Someone wanted to complete a task, and we, as the people who build the very systems they use, failed to provide them with a safe and easy way to do so. It’s time we do better by the people we build things for.

Design as a tool

Perhaps we respond to bad security decisions with such incredulity because we assume that security carries the same weight with the everyday person as it does with us. So it’s worth noting well and it’s worth noting early: No one cares about security. Sure, everyone cares in theory, but for most, it’s rarely top of mind. This is old news.

“Given a choice between dancing pigs and security, the user will pick dancing pigs every time.”

—Gary McGraw and Edward Felten, Securing Java: Getting Down to Business with Mobile Code

People don’t care about security, often for rational reasons. For example, passwords are notorious for being difficult and unfriendly. On top of that, most large financial institutions take on the liability of fraudulent logins. Other companies often monitor and block unusual behavior. A simple cost-benefit analysis doesn’t make a good case for putting in all that effort to create and remember dozens of unique, strong passwords.

So no one cares about security, but that’s okay. Because they shouldn’t have to.

We should.

It’s our job to build accessible, performant, and secure experiences for everyone. That’s the baseline, whether we’re security experts, developers, or designers.

One of the most valuable lessons I’ve learned is that our jobs are about outcomes, not outputs. It doesn’t matter how beautiful, correct, or “perfect” something is if no one wants to use it. That’s where design comes in. Design concerns itself not just with visual beauty, but with usability, first and foremost. Think of design as another tool in your problem-solving toolbox—a tool that allows us to stop saying “no,” and to learn how, when, and to what to say “yes.”

Saying “yes”: How, when, and to what

There are four approaches to thinking about security problems through the lens of behavioral design:

Paths of least resistance

Determining intent

Curbing miscommunication

Matching mental models

On the whole, these approaches are designed to take you out of your daily life, centered around technicalities, and put you into the shoes of your end users.

Let’s start with an exercise to shift your thinking from what can be done in your systems to what will be done.

Paths of least resistance

In security, we’re used to putting up walls.

But it’s increasingly apparent that tossing challenges and decisions at end users whenever there is the possibility of risk is simply not effective.

If we approach security through a design thinking lens, we can stop thinking about building walls and start thinking about carving rivers. We can direct people in such a way that the path of least resistance matches the path of most security.

The zero-order path: Doing nothing

In many ways, we are already doing this. Consider the “secure by default” principle. Security by default is simply the path of least resistance: What happens when one does nothing? It can be as simple as defaulting to the least-privilege, least-risk option or setting, incrementally stepped up as the user needs it.
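As a minimal sketch of what that can look like in code, consider settings for a hypothetical document-sharing feature. The names `Access`, `ShareSettings`, and `step_up` are assumptions made up for illustration, not part of any real library. The point is only that a user who does nothing lands on the least-privilege option, and more access is granted in small, explicit steps.

```python
from dataclasses import dataclass
from enum import IntEnum


class Access(IntEnum):
    """Hypothetical privilege levels, ordered from least to most risk."""
    READ_ONLY = 0
    EDIT = 1
    ADMIN = 2


@dataclass
class ShareSettings:
    """Settings for a hypothetical sharing feature.

    Every default is the least-privilege, least-risk option, so a user
    who changes nothing ends up on the secure path.
    """
    access: Access = Access.READ_ONLY
    link_is_public: bool = False
    link_expires_in_days: int = 7

    def step_up(self, requested: Access) -> None:
        """Raise privileges one level at a time, only on explicit request."""
        if requested > self.access + 1:
            raise ValueError("step up one level at a time")
        self.access = max(self.access, requested)


settings = ShareSettings()      # the user did nothing: read-only, private link
settings.step_up(Access.EDIT)   # the user asked for more, explicitly
```

The specific defaults (a seven-day expiry, three access levels) are placeholders. What matters is that the zero-order path costs the user nothing and is still the safe one.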

First-order paths: Doing something

Make security actions a natural part of any process. No one likes to be given homework, especially when they’ve got better things to do.

Think about what you do when you plan your day. On a good day, you probably try to avoid the annoyance of nonstop context switching; perhaps you try to group tasks that require similar brain modes together. (You might, for instance, avoid scheduling a meeting in the middle of your focus time, because it could ruin that entire block of head-down work.)

Approach security actions with the same organized mindset. For example, if you need your end user to set up MFA, make it a natural part of the sign-up process—don’t count on them digging through your settings menu later. If someone has already committed to a long setup process, completing one more task is likely not too arduous. (Consider how exhausting three back-to-back meetings about different topics can be, while a whole day dedicated to a single project can be revitalizing.)
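One way to picture this is a sign-up flow where MFA enrollment is just another step in the sequence. The sketch below is an assumption-laden illustration: the step names and the `Signup` class are hypothetical, and a real flow would have far more going on.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Hypothetical sign-up steps, in the order the user sees them.
# MFA enrollment sits inside the flow, not in a settings menu.
SIGNUP_STEPS = ["email", "password", "mfa_enrollment", "profile"]


@dataclass
class Signup:
    completed: List[str] = field(default_factory=list)

    def next_step(self) -> Optional[str]:
        """Return the next step to show, or None when sign-up is done."""
        for step in SIGNUP_STEPS:
            if step not in self.completed:
                return step
        return None

    def complete(self, step: str) -> None:
        """Mark a step done; steps must be completed in order."""
        expected = self.next_step()
        if step != expected:
            raise ValueError(f"expected {expected!r}, got {step!r}")
        self.completed.append(step)

    @property
    def is_active(self) -> bool:
        """The account is usable only once every step, MFA included, is done."""
        return self.next_step() is None
```

Because the user is already in "setup mode," the MFA step arrives as one more screen in a process they have committed to, rather than as homework assigned later.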

If we want users to make good security decisions, we must keep their goals and our security goals aligned, and plan and build accordingly. When these goals become misaligned, people start subverting security measures, both unintentionally and intentionally. Make it easier by grouping similar tasks, and tasks that require similar mindsets, together—it’s natural for us to be economical with our physical and mental resources.

Second-order paths and the false alarm effect

When a security process is presented as something that obstructs, rather than something that enables, people will find another path.

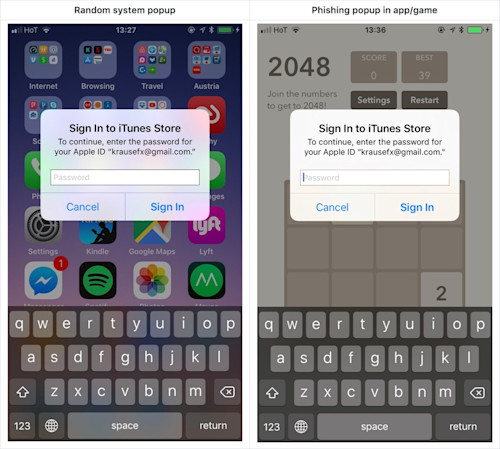

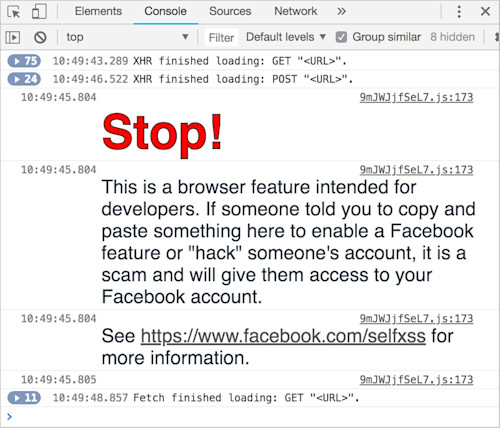

We see this all the time with self-XSS attacks. Even when we do our best to protect our users, it sometimes seems like they go out of their way to be insecure.

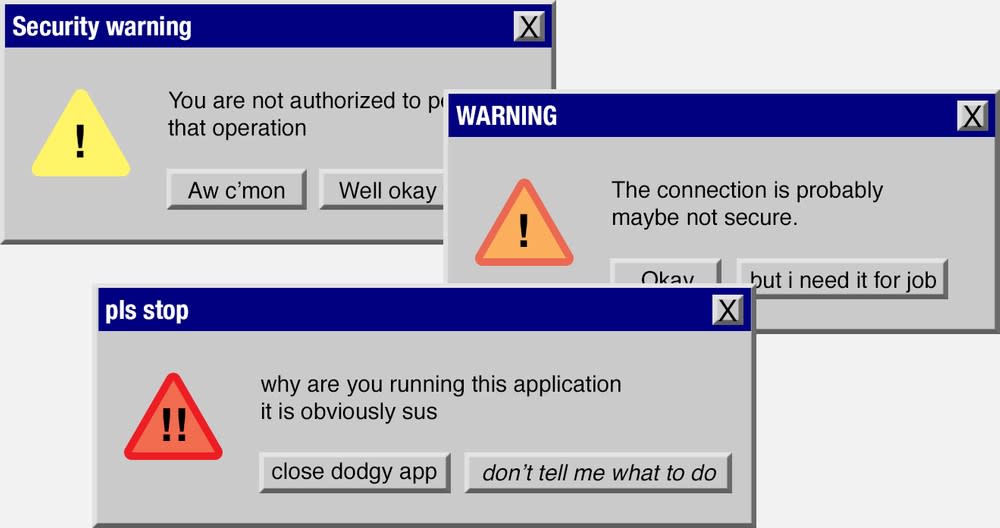

Why does introducing friction for high-risk options sometimes not work? Here, it’s not just about paths of least resistance—it’s about paths of perceived least resistance. If dismissing 10 security warnings in a row is how someone learns to get their task done, they’ll do it, every time. And the more they see these warnings, the less they truly see them at all.

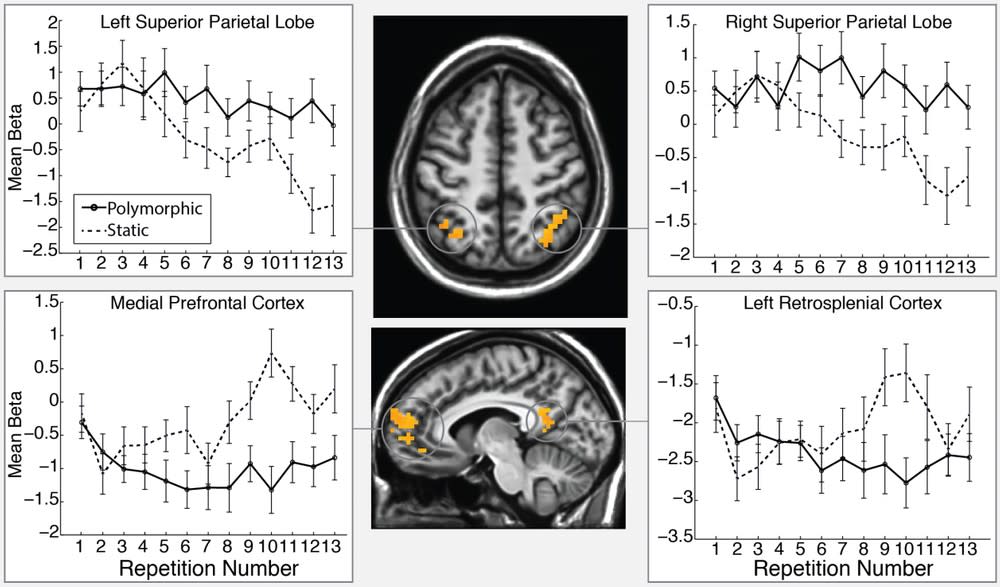

In a 2015 study, a group of researchers at Brigham Young University watched participants inside an fMRI machine as they viewed repeated security warnings. They found that the participants did less visual processing of the dialogs after as few as two exposures.

And it gets worse. It’s not just end users who ignore security warnings and due process. It’s us, too.

“Shadow IT” refers to IT systems that spring up inside a company without approval. Often, it’s employees sidestepping a process because the one sanctioned by security is too hard, or they don’t feel it’s reasonable. Shadow IT is a massive vulnerability, and it’s pervasive. Have you ever worked at a company with a password rotation policy? You know what happens, right?

If you put obstacle courses inside your product, application, or organization, people will get really good at obstacle courses. Everyone just wants to get things done.

Fixing bad paths, building good paths

One of the most common things I see in corporate offices is security tools being used for things that have nothing to do with security. For example, a company might block YouTube to deter employees from watching videos during the workday. But if an employee spends their entire day watching videos on YouTube instead of doing their work, that’s a management problem, not a security problem. If you block enough non-security problems with security tools, people will find a way around your firewall without feeling even a little bit bad about it.

If you want to craft good paths, use security tools as they are intended, and make the secure path the easiest.

An example of this is the BeyondCorp security model, developed by Google in response to an advanced persistent threat in 2009. Traditionally, there are security boundaries like “on network” and “off network,” where everything inside the network is assumed to be trusted, and everything outside of it is not. The BeyondCorp model removed these security perimeters entirely. Essentially, Google put its entire work network on the internet.

When your entire work network is on the internet, securing it all becomes harder than simply declaring some things to be absolutely secure and other things to be absolutely insecure. You need to reliably authenticate your users and reasonably map out their application use and data access. And, most importantly, you need to cater to your employees well enough that they don’t sidestep any of the new security policies.

And that’s what made this model so successful. It wasn’t just the technicalities of how it worked—there was a strong focus on user experience when it came to implementation.

“We designed our tools so that the user-facing components are clear and easy to use. […] For the vast majority of users, BeyondCorp is completely invisible.”

—Victor Manuel Escobedo, Filip Zyzniewski, Betsy Beyer, and Max Saltonstall, “BeyondCorp: The User Experience”

And that’s how security should be. Clear, easy, and invisible.

Align your goals with the user’s goals. Build rivers, not walls.

Determining intent

The tension between usability and security generally occurs when we cannot accurately determine intent.

You need to determine what level and flavor of security is most appropriate for any given scenario or user. A one-size-fits-all security solution simply doesn’t exist. Instead, ensure that you have a clear view of your users’ goals and intentions. This will help you lay a strong foundation for how you approach every problem and each step of building your app, product, or organization.

But inferring intent is notoriously difficult, so instead of thinking about intent and desired outcomes, we usually fall back on familiar patterns. Like: “I’m a designer and usability is my responsibility, so I need to make everything easy.” Or: “I’m a security expert and security is my responsibility, so I need to lock everything down.” Too often, we make it about what we think we are supposed to do instead of what the user is trying to do.

In reality, our job is not to make everything easy. Our job is to make

a specific action

that a specific user wants to take

at a specific time

in a specific place

easy. Everything else, we can lock down.

Therefore, tightening security without sacrificing usability requires us to know intent.

It’s easier said than done, but we can start with simple heuristics. Who is the end user? Obtaining better clues about user intent can be as simple as making sure our authentication systems are robust and trustworthy. When does this user take a specific action? Where are they? Can we better model user behavior around time and location? Are our sources for this data too easily spoofed? (And is it actually necessary for us to have this data? What’s the minimum we need to do our jobs?)

We can go further, too. What actions does this user take regularly? What is unusual about their behavior? Do we expect this user to take these specific actions? If not, can we raise a password challenge? A 2FA challenge?
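These questions can be turned into a rough heuristic. The sketch below is only an illustration under stated assumptions: `UserHistory`, `Request`, and the thresholds in `challenge_for` are hypothetical, and a real system would need better signals than three set lookups. It counts how many of "what," "when," and "where" look out of character, and steps up the challenge accordingly.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class UserHistory:
    """What we have previously seen from this user (hypothetical model)."""
    usual_countries: Set[str] = field(default_factory=set)
    usual_hours: Set[int] = field(default_factory=set)  # 0-23, user's local time
    usual_actions: Set[str] = field(default_factory=set)


@dataclass
class Request:
    action: str
    country: str
    hour: int


def unusual_signals(history: UserHistory, request: Request) -> int:
    """Count how many of where/when/what look out of character."""
    return sum([
        request.country not in history.usual_countries,
        request.hour not in history.usual_hours,
        request.action not in history.usual_actions,
    ])


def challenge_for(history: UserHistory, request: Request) -> str:
    """Map the number of unusual signals to a challenge.

    The thresholds are illustrative assumptions, not recommendations.
    """
    signals = unusual_signals(history, request)
    if signals == 0:
        return "none"      # expected behavior: stay out of the way
    if signals == 1:
        return "password"  # slightly odd: a light challenge
    return "2fa"           # clearly out of character: a stronger challenge
```

For example, with a history of `{"NZ"}`, hours `{9, 10, 11}`, and actions `{"view_balance"}`, a balance check from NZ at 10:00 raises no challenge, while a wire transfer from elsewhere at 03:00 raises a 2FA challenge. The common case stays frictionless; friction is reserved for the moments when intent is genuinely unclear.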

While we should do our best to infer end user intent and serve it, it is equally important not to become overconfident. Chances are our users know their risk profiles and threat models better than we do (even if they might not know that terminology), and it’s important that we recognize that.

In security, we often think about solutions for the worst cases, rather than actual cases, which can cause a disconnect between expert security advice and security advice that users respond to. Designing for the most common intentions first helps bridge that gap. In any accurate model of user behavior, complete randomness is an outlier, and something that requires significant effort to pull off. For more erratic users, leverage secure-by-default principles as a backstop. If their behavior is consistently unusual, regularly raising authentication challenges is fine.

The takeaway: Next time you get caught in an inevitable battle between security and usability, take a step back and look for ways to clarify intent.

(Mis)communication

I want you to think about communication differently. It’s not just a mushy, fleshy, human-based IO that’s strange and subjective. Miscommunication is a human security vulnerability. Social engineers and phishing attackers exploit areas of ambiguity, and miscommunication is ambiguity at its finest.

Ask yourself this: What are you unintentionally miscommunicating?

A few months ago, Google announced that they would no longer display green “secure” locks in their Chrome browser, but would instead highlight websites without (or with invalid) SSL certificates. In essence, this is good: Security should be largely invisible, and only attention-grabbing when something is wrong.

Those of us who work in tech generally know what that “secure” lock means: Our communications with the domain name displayed are encrypted, and the domain displayed is what it says it is (according to some mysterious, wizard-robed certificate authority).

But if you don’t know the finer details, what might you reasonably surmise that green “secure” lock means?

You shouldn’t be faulted for thinking it means secure. But these “secure” pages aren’t necessarily so; this is a point of miscommunication. And whenever there is a miscommunication, there exists a human security vulnerability.

Let’s say I got bored one night and felt like doing some crime. If I were sufficiently motivated, I could buy a legitimate-sounding domain name. I could nab a free SSL certificate. And with my 1337 haXXor skills in HTML, CSS, and copy-pasting nameservers, I could set up a very convincing phishing site… that the browser explicitly labels as “secure.”

There are ways to do better. One of those ways is through testing.

Every so often, pentesters will come in and test applications for vulnerabilities. It’s a crucial step before releasing anything substantial, and it’s an investment well worth making. I challenge you to start thinking about usability and user testing the same way.

User testing (not to be conflated with user research) is a later-stage UX exercise where we present customers with a near-finished design, prototype, or working app, and observe how they interact with it. It can help you see how communicative your copy is (especially as short production copy may have been drafted by a developer whose head was in the weeds) and how effective your design is. It’s an opportune time to observe whether the interfaces you’ve made accurately convey what’s happening within the system, and to spot human security vulnerabilities. Even slight miscommunications with the best of intentions can lead to bad security outcomes. Testing can help you identify these miscommunications and prevent them from becoming full-blown security incidents.

Mental model matching

The end user’s expectations define whether a system is secure or not.

Think about it: A man-in-the-middle attack isn’t inherently insecure. “Telephone” is a string of man-in-the-middle attacks, and it’s not a security incident; it’s an innocent children’s game.

Man-in-the-middle attacks are bad in our context because our users expect their data and communications to only be available to expected parties, and the party in the middle is unexpected.

“A system is secure from a given user’s perspective if the set of actions that each actor can do [is] bounded by what the user believes they can do.”

—Ka-Ping Yee, “User Interaction Design for Secure Systems”
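Read literally, that definition is a subset check: for every actor, what they can actually do must fall within what the user believes they can do. Here is a minimal sketch of that reading. The function names and the dictionary representation are my own assumptions for illustration, not something taken from Yee’s paper.

```python
from typing import Dict, List, Set, Tuple


def is_secure_from_user_view(
    actual: Dict[str, Set[str]],
    believed: Dict[str, Set[str]],
) -> bool:
    """Return True if every actor's actual actions fall within the user's beliefs.

    `actual` maps each actor to the actions it can really perform.
    `believed` maps each actor to the actions the user thinks it can perform.
    An actor the user has never heard of is believed to be able to do nothing.
    """
    return all(
        actions <= believed.get(actor, set())
        for actor, actions in actual.items()
    )


def surprises(
    actual: Dict[str, Set[str]],
    believed: Dict[str, Set[str]],
) -> List[Tuple[str, str]]:
    """List the (actor, action) pairs the user does not expect, sorted."""
    return sorted(
        (actor, action)
        for actor, actions in actual.items()
        for action in actions - believed.get(actor, set())
    )


# What the user believes about a private message.
believed = {"me": {"read", "send"}, "recipient": {"read"}}

# What is actually possible once someone sits in the middle.
actual = {"me": {"read", "send"}, "recipient": {"read"}, "eavesdropper": {"read"}}
```

With these inputs, `is_secure_from_user_view(actual, believed)` is `False`, and `surprises(actual, believed)` names the eavesdropper’s ability to read. In a game of Telephone, every player in the chain is already in `believed`, so the same check passes.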

Designing secure systems means we must understand intent—and then go a step further. We must also understand the user’s mental model of our system. How do they expect things to work?

Finding the end user model

There’s a whole world outside our tech office doors, a world full of people who use what we build. For us, tech might be both a hobby and a job, but for most, technology is a means to an end.

If we forget to step outside ourselves and create systems that consider real-world use cases, the things we make may well fail. The best way to empathize with the end user experience is to practice, and the best way to practice is to observe the end user experience.

If you have access to them, ask if you can sit in on customer interviews and user testing sessions. I cannot overstate the value of these sessions: Inevitably, every person in the room has at least one personally held assumption completely destroyed. It’s easy to think we know how customers will behave. Regularly going to user research sessions keeps you honest.

Influencing the end user model

When we create, we teach. Whenever someone interacts with a thing we’ve made, they learn. When we don’t think about the patterns we’re encouraging, the lessons we’re offering, and how our security actions influence the end user model, we create opportunities for attacks.

Ask yourself: What are you inadvertently teaching users? If we constantly bombard them with password pop-ups, are we teaching them to enter passwords any time a similar window appears? If they can bypass a security feature by canceling it 10 times, are we teaching them to ignore warnings? Are we teaching them that security is an obstruction?

“Is this secure?” is a meaningless question without first defining who it’s secure for, in what contexts it operates, and what the user expects security to mean.

Seek to understand your user’s mental model for your application, product, or organization. Speak to that model, rather than the detailed ins and outs of what you understand.

The tl;dr

There are no hard-and-fast rules for usable security, and cross-pollination between designers and security experts is essentially nonexistent. This is a massive missed opportunity. Collaboration, or at least extending a hand across the aisle, elevates the solution spaces for all of our subfields.

Our jobs are ultimately about outcomes, not outputs. When we reach outside our comfort zones with empathy, we can go beyond our daily patterns and move toward building software, hardware, and processes that are impactful, that solve real problems.

Next time you’re caught in a tug-of-war between usability and security, ask yourself:

How can we make the easiest path the most secure one?

What is the user’s intent? Can we make better inferences?

Where are the points of ambiguity and miscommunication?

What is the user’s mental model of what’s going on?

One final anecdote

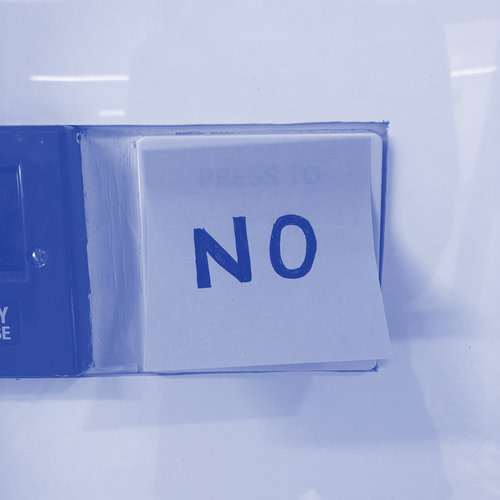

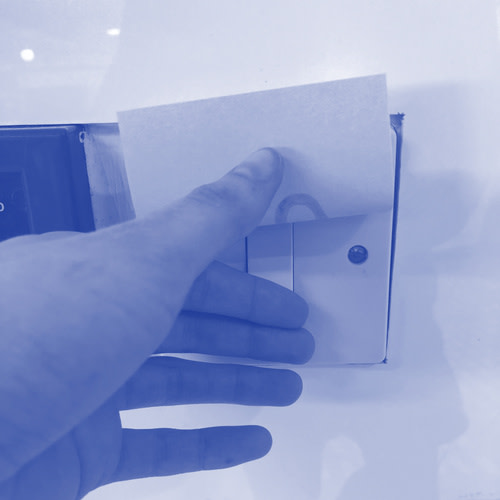

There’s a light switch in my office with a sticky note over it that says, “No.”

Can you guess the first thing I did?

Remember: Build rivers, not walls.